Flood PAHM Disruption - PAK | raster analysis#

Health Emergencies Preparedness and Response Program (HEPR)#

This notebook is a template workflow to collect data and prepare the main data to perform a baseline physical accessibility analysis to health facilities.

It uses various tools developed by the World Bank’s Geospatial Operations Support Team (GOST).

This notebook focuses on a raster-based implementation of market access, using the motorized Global Friction Surface from the Malaria Atlas Project.

Additionaly, it uses population data from World Pop (Unconstrained UN-Adjusted 2020, 1km resolution).

Data Download Links#

Setup#

Import packages required for the analysis

# System

import sys

import os

from os.path import join, expanduser

from pathlib import Path

# Avoid warnings to pop up

import warnings

warnings.filterwarnings('ignore')

# Visualization tools

# import folium as flm

import matplotlib.pyplot as plt

import matplotlib.colors as colors

import matplotlib.gridspec as gridspec

from rasterio.plot import plotting_extent

from rasterio.plot import show

from mpl_toolkits.axes_grid1 import make_axes_locatable

import contextily as ctx

import cartopy

import cartopy.crs as ccrs

import cartopy.feature as cfeature

import seaborn as sns

os.environ['CARTOPY_USER_BACKGROUNDS'] = '/home/jupyter-wb618081/Python/Backgrounds/'

# Processing

import numpy as np

import geopandas as gpd

import pandas as pd

from gadm import GADMDownloader

# Raster

import rasterio as rio

from rasterio.features import shapes

from shapely.geometry import box

from rasterio.features import geometry_mask

from rasterstats import zonal_stats

from shapely.geometry import Polygon, box, Point

from shapely.geometry import mapping

import skimage.graph as graph

from scipy.signal import convolve2d

# Graph

import pickle

import networkx as nx

import osmnx as ox

# for facebook data

from pyquadkey2 import quadkey

# Climate/Flood

# import xarray as xr

# Define your path to the Repositories

sys.path.append(join(expanduser("/home/jupyter-wb618081"), 'Repos', 'gostrocks', 'src'))

sys.path.append(join(expanduser("/home/jupyter-wb618081"), 'Repos', 'GOSTNets_Raster', 'src'))

sys.path.append(join(expanduser("/home/jupyter-wb618081"), 'Repos', 'GOSTnets'))

sys.path.append(join(expanduser("/home/jupyter-wb618081"), 'Repos', 'GOST_Urban', 'src', 'GOST_Urban'))

sys.path.append(join(expanduser("/home/jupyter-wb618081"), 'Repos', 'health-equity-diagnostics', 'src', 'modules'))

sys.path.append(join(expanduser("/home/jupyter-wb618081"), 'Repos', 'INFRA_SAP'))

import GOSTnets as gn

from GOSTnets.load_osm import *

import GOSTRocks.rasterMisc as rMisc

from GOSTRocks.misc import get_utm

import GOSTNetsRaster.market_access as ma

import UrbanRaster as urban

from infrasap import aggregator

from infrasap import osm_extractor as osm

from utils import download_osm_shapefiles

# auto reload

%load_ext autoreload

%autoreload 2

Define below the local folder where you are located

data_dir = join(expanduser("/home/jupyter-wb618081"), 'data')

scratch_dir = join(expanduser("/home/jupyter-wb618081"), 'Health-Access-Metrics')

out_path = join(expanduser("/home/jupyter-wb618081"), 'Health-Access-Metrics', 'Output')

## Function for creating a path, if needed ##

def checkDir(out_path):

if not os.path.exists(out_path):

os.makedirs(out_path)

Data Preparation#

Administrative boundaries#

epsg = "EPSG:4326"

epsg_utm = "EPSG:32736"

country = 'Pakistan'

iso = 'PAK'

downloader = GADMDownloader(version="4.0")

adm0 = downloader.get_shape_data_by_country_name(country_name=country, ad_level=0)

adm1 = downloader.get_shape_data_by_country_name(country_name=country, ad_level=1)

adm2 = downloader.get_shape_data_by_country_name(country_name=country, ad_level=2)

adm1.rename(columns={"NAME_1":"ADM1"}, inplace=True)

adm2.rename(columns={"NAME_1":"ADM1","NAME_2":"ADM2"}, inplace=True)

# iso = 'MWI'

# adm0_path = join(expanduser("R:/"), 'Data', 'GLOBAL/ADMIN', f'Admin0_Polys.shp')

# adm0 = gpd.read_file(adm0_path)

# adm0 = adm0[adm0["ISO3"] == "MWI"].to_crs(4326)

# iso = 'MWI'

# adm2_path = join(expanduser("R:/"), 'Data', 'GLOBAL/ADMIN', f'Admin2_Polys.shp')

# adm2 = gpd.read_file(adm2_path)

# adm2 = adm2[adm2["ISO3"] == "MWI"].to_crs(4326)

Population (origin)#

# wp_path = join(expanduser("R:/"), 'Data', 'GLOBAL/Population/WorldPop_PPP_2020/MOSAIC_ppp_prj_2020', f'ppp_prj_2020_{iso}.tif') # Download from link above

wp_path = join(data_dir, iso, f'pak_ppp_2020_1km_Aggregated.tif') # Download from link above

pop_surf = rio.open(wp_path)

Health Facilities (destinations)#

# BHU (Basic Health Unit)

# CD/GD (Civil Dispensary)

# RHC (Rural Health Center)

# SHC (Secondary Health Care)

hf_path = join(data_dir, iso, "health_facilities/", 'pakistan.csv')

df_hf = pd.read_csv(hf_path, header = 0)

df_hf = df_hf[["X", "Y", "osm_id", "amenity"]]; df_hf.rename(columns = {"osm_id": "ID", "amenity": "Facility Type"}, inplace = True)

display('The following categories and numbers of Health Facilities are considered to perform the analysis: ')

display(df_hf["Facility Type"].value_counts())

# Consider all health facilities and hospitals

df_hf_hosp = df_hf.loc[df_hf['Facility Type'] == "hospital"]

'The following categories and numbers of Health Facilities are considered to perform the analysis: '

Facility Type

hospital 1673

clinic 633

pharmacy 528

doctors 187

dentist 41

Name: count, dtype: int64

# Convert from pandas.Dataframe to Geopandas.dataframe

geodf_hf = gpd.GeoDataFrame(

df_hf, geometry=gpd.points_from_xy(df_hf.X, df_hf.Y), crs=epsg

)

geodf_hf_hosp = gpd.GeoDataFrame(

df_hf_hosp, geometry=gpd.points_from_xy(df_hf_hosp.X, df_hf_hosp.Y), crs=epsg

)

# Clean the geodf

geodf_hf = geodf_hf[['ID', 'Facility Type', 'geometry']]; #geodf_hf.loc[:, 'ID'] = df_hf.index

geodf_hf_hosp = geodf_hf_hosp[['ID', 'Facility Type', 'geometry']]; # geodf_hf_hosp.loc[:, 'ID'] = df_hf_hosp.index

geodf_hf = geodf_hf[~geodf_hf.geometry.is_empty]

geodf_hf_hosp = geodf_hf_hosp[~geodf_hf_hosp.geometry.is_empty]

Assure correspondence of ADM1 names in Health facilities and official Administrative Units

geodf_hf = gpd.sjoin(geodf_hf, adm1[["ADM1", "geometry"]], op='within', how='left')

geodf_hf.drop(columns = ["index_right"], inplace = True)

geodf_hf_hosp = gpd.sjoin(geodf_hf_hosp, adm1[["ADM1", "geometry"]], op='within', how='left')

geodf_hf_hosp.drop(columns = ["index_right"], inplace = True)

Flood#

Here, we import Fathom flood data (.tif) of fluvial floods with different return periods.

This data represent and mimic the climate impact on infrastructure and the disruption of the accessibility to health facilities.

# Import multiple rasterio .tif file as a dictionary

# Keys are return periods

# Values are rasterio arrays

# inland waters and oceans: 999

# not-flooded areas: -999 (Fluvial)

# not-flooded areas: 0 (Pluvial)

# Other values represent the flood depth (in m)

flood_fluvial_path = join(data_dir, iso,'FLOOD_SSBN','fluvial_undefended')

flood_pluvial_path = join(data_dir, iso,'FLOOD_SSBN','pluvial')

files=os.listdir(flood_fluvial_path)

flood_dict_fluvial = {}

for file in files:

key = file.split('_')[1].split('.')[0]

value = rio.open(join(flood_fluvial_path,file)) #.read(1)

flood_dict_fluvial[key] = value

files=os.listdir(flood_pluvial_path)

flood_dict_pluvial = {}

for file in files:

key = file.split('_')[1].split('.')[0]

value = rio.open(join(flood_pluvial_path,file)) #.read(1)

flood_dict_pluvial[key] = value

# Preserve the maximum flood depth

flood_dict = {}

for f,key in enumerate(flood_dict_pluvial.keys()):

out_flood_path = join(data_dir, iso,'FLOOD_SSBN', 'Fmax_' + key +'.tif')

if os.path.isfile(out_flood_path):

value = rio.open(out_flood_path)

flood_dict[key] = value

else:

out_meta = flood_dict_pluvial[key].meta

flood_max = np.fmax(flood_dict_fluvial[key].read(1),flood_dict_pluvial[key].read(1))

flood_dict[key] = flood_max

# flood_dict[key][flood_dict[key] == 0] = -999

# Write the output raster

out_flood_path = join(data_dir, iso,'FLOOD_SSBN', 'Fmax_' + key +'.tif')

with rio.open(out_flood_path, 'w', **out_meta) as dst:

dst.write(flood_max, 1)

# Read the output raster

value = rio.open(out_flood_path)

flood_dict[key] = value

# Free up memory

for f,key in enumerate(flood_dict.keys()):

del flood_dict_fluvial[key]

del flood_dict_pluvial[key]

Friction Surface#

Process the travel cost surface from the Malaria Atlas Project, clip the raster to our region of interest.

# Only the first time, clip the travel friction surface to the country of interest

out_travel_surface = join(data_dir, iso, f"travel_surface_motorized_{iso}.tif")

if not os.path.isfile(out_travel_surface):

gfs_path = join(data_dir, '2020_motorized_friction_surface.geotiff')

gfs_rio = rio.open(gfs_path)

rMisc.clipRaster(gfs_rio, adm0, out_travel_surface, crop=False)

# Import the clipped friction surface

travel_surf = rio.open(out_travel_surface) #.read(1)

print(travel_surf.res)

print(pop_surf.res)

(0.008333333333333333, 0.008333333333333333)

(0.0083333333, 0.0083333333)

Preprocessing#

Align the POPULATION & FLOOD raster to the friction surface, ensuring that they have the same extent and resolution.

# If the Standardized data are already present, skip, else generate them

def checkDir(out_path):

if not os.path.exists(out_path):

os.makedirs(out_path)

checkDir(join(out_path, iso))

out_pop_surface_std = join(out_path, iso, "WP_2020_1km_STD.tif")

if not os.path.isfile(out_pop_surface_std):

rMisc.standardizeInputRasters(pop_surf, travel_surf, out_pop_surface_std, resampling_type="nearest")

checkDir(join(out_path, iso, "flood"))

for f,key in enumerate(flood_dict.keys()):

# out_flood_path = join(data_dir, iso,'FLOOD_SSBN', 'Fmax_' + key)

out_flood_std = join(out_path, iso, 'flood', "STD_" + 'Fmax_'+ key +'.tif')

if os.path.isfile(out_flood_std):

None

else:

rMisc.standardizeInputRasters(flood_dict[key], travel_surf, out_flood_std, resampling_type="nearest")

Correct the reprojected FLOOD raster extension, ensuring that lands are not covered by inland and open waters

# Import multiple rasterio .tif file as a dictionary

# Keys are return periods

# Values are rasterio arrays

flood_path = join(out_path, iso, 'flood')

files=os.listdir(flood_path)

flood_dict_std = {}

for file in files:

if file.startswith('STD') == True:

key = file.split('_')[2].split('.')[0]

value = rio.open(join(flood_path,file)) #.read(1)

flood_dict_std[key] = value

display(flood_dict_std["1in10"].read_crs())

CRS.from_epsg(4326)

Origins#

Prepare a standard grid (pandas.Dataframe) using each cell from the 1km World Pop raster.

pop_surf = rio.open(out_pop_surface_std)

pop = pop_surf.read(1, masked=False)

# Create a population df from population surface

indices = list(np.ndindex(pop.shape))

xys = [Point(pop_surf.xy(ind[0], ind[1])) for ind in indices]

res_df = pd.DataFrame({

'spatial_index': indices,

'xy': xys,

'pop': pop.flatten()

})

res_df['pointid'] = res_df.index

res_df

| spatial_index | xy | pop | pointid | |

|---|---|---|---|---|

| 0 | (0, 0) | POINT (60.89583333333332 37.09583333333333) | 8.048295 | 0 |

| 1 | (0, 1) | POINT (60.904166666666654 37.09583333333333) | 7.362457 | 1 |

| 2 | (0, 2) | POINT (60.91249999999999 37.09583333333333) | 9.171885 | 2 |

| 3 | (0, 3) | POINT (60.92083333333332 37.09583333333333) | 9.375657 | 3 |

| 4 | (0, 4) | POINT (60.92916666666665 37.09583333333333) | 8.939575 | 4 |

| ... | ... | ... | ... | ... |

| 3272275 | (1607, 2030) | POINT (77.81249999999999 23.704166666666666) | 212.114243 | 3272275 |

| 3272276 | (1607, 2031) | POINT (77.82083333333333 23.704166666666666) | 141.163818 | 3272276 |

| 3272277 | (1607, 2032) | POINT (77.82916666666665 23.704166666666666) | 128.055283 | 3272277 |

| 3272278 | (1607, 2033) | POINT (77.83749999999998 23.704166666666666) | 100.614090 | 3272278 |

| 3272279 | (1607, 2034) | POINT (77.84583333333332 23.704166666666666) | 86.039619 | 3272279 |

3272280 rows × 4 columns

Flood impact on Health facilities#

Consider the raw Fmax flood rasters, thus exploiting the original FATHOM dataset resolution

For every Standardized Flood layer (Return Period), extract Flood Depth on Health Facilities location

# Extract flood depth at every facility location

for key in flood_dict.keys():

coords = [(x,y) for x, y in zip(geodf_hf_hosp.geometry.x, geodf_hf_hosp.geometry.y)]

geodf_hf_hosp[key] = [x[0] for x in flood_dict[key].sample(coords)]

coords = [(x,y) for x, y in zip(geodf_hf.geometry.x, geodf_hf.geometry.y)]

geodf_hf[key] = [x[0] for x in flood_dict[key].sample(coords)]

# Identify Flooded and not Flooded Facilities

flood_geodf_hf_hosp = {}

dry_geodf_hf_hosp = {}

flood_geodf_hf = {}

dry_geodf_hf = {}

for key in flood_dict.keys():

flood_geodf_hf_hosp[key] = geodf_hf_hosp[geodf_hf_hosp[key] > 0.2]

dry_geodf_hf_hosp[key] = geodf_hf_hosp[geodf_hf_hosp[key] == 0]

flood_geodf_hf[key] = geodf_hf[geodf_hf[key] > 0.2]

dry_geodf_hf[key] = geodf_hf[geodf_hf[key] == 0]

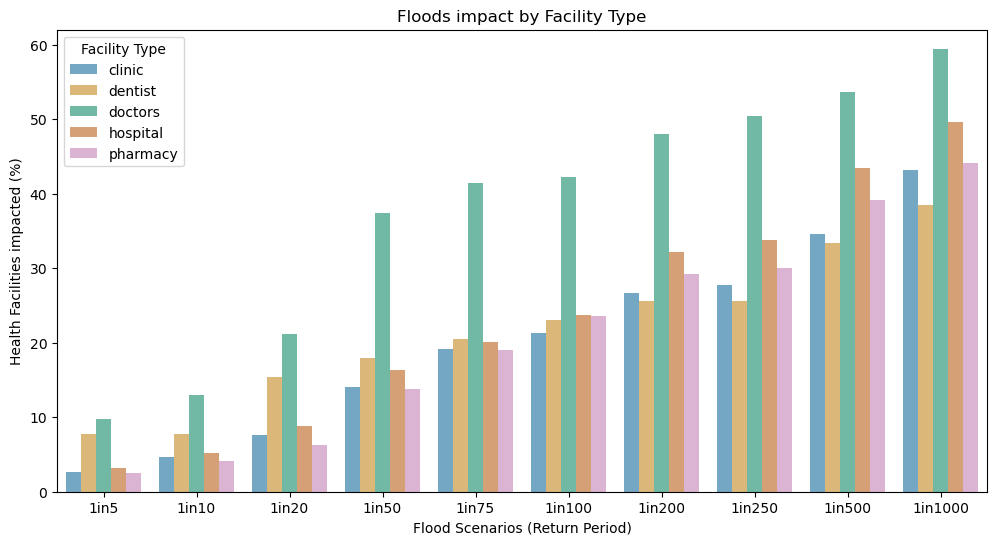

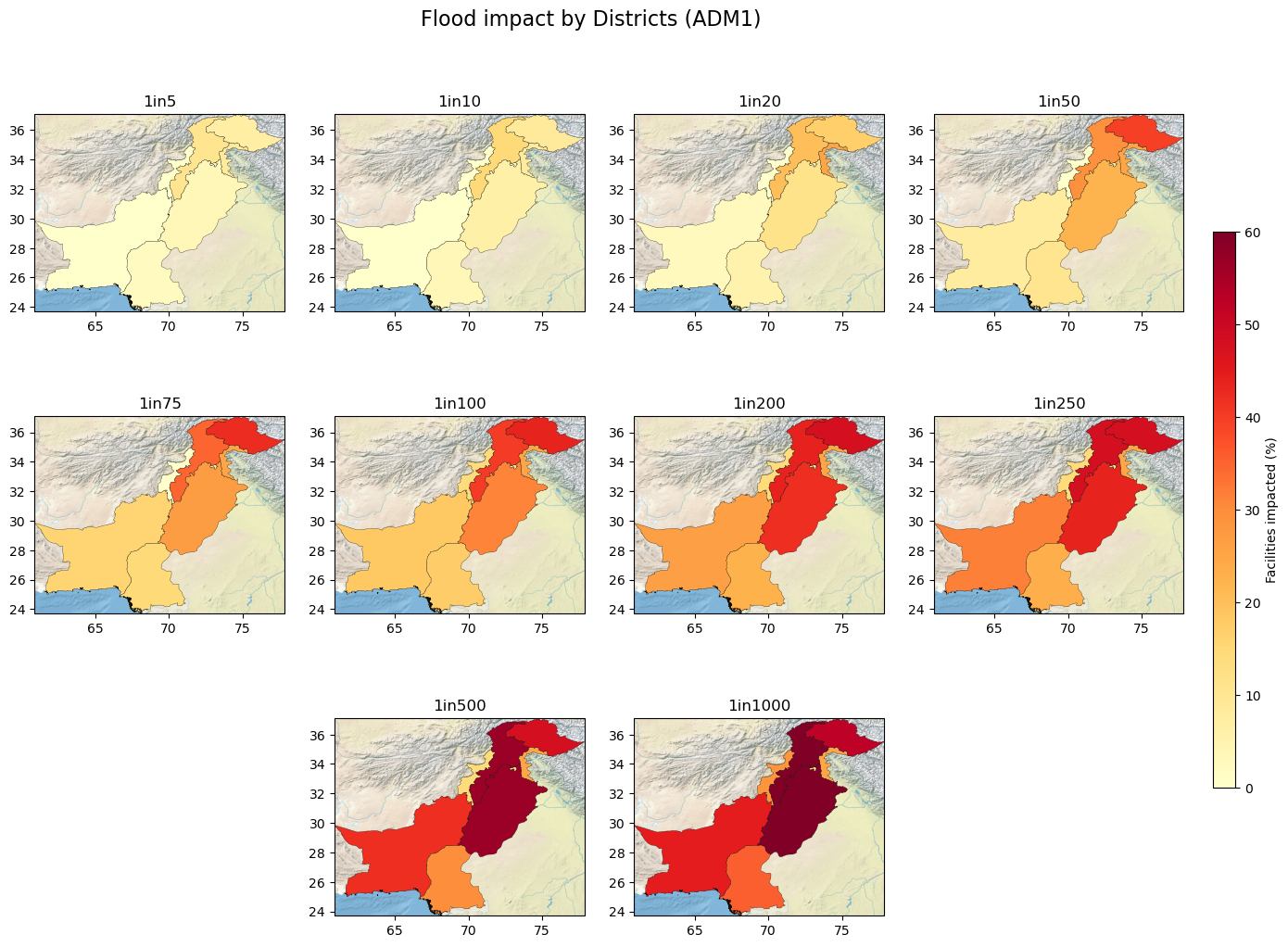

Summary statistics#

For every Flood Scenario (RP):

Number and % of Health facilities disrupted by Facility Type

Number and % of Health facilities disrupted by ADM1 Districts

# Reorder Flood Return Periods in geodf columns

def sort_flood_col(gdf, str_start, str_sep):

# Define str_start and str_sep as the strings that identify the start of column names and the separator with the numerical value

fd_columns = [col for col in gdf.columns if col.startswith(str_start)]

non_fd_columns = [col for col in gdf.columns if not col.startswith(str_start)]

fd_num = [(int(col.split(str_sep)[1]), col) for col in fd_columns]

fd_col_sort = [col for _, col in sorted(fd_num)]

new_column_order = non_fd_columns + fd_col_sort

# Reorder the DataFrame columns

gdf = gdf[new_column_order]

return(gdf)

geodf_hf = sort_flood_col(geodf_hf, "1in", "in")

geodf_hf_hosp = sort_flood_col(geodf_hf_hosp, "1in", "in")

# % of Facilities disrupted, by Facility Type

stats1 = geodf_hf[geodf_hf != 0].groupby("Facility Type").count().drop(columns = ["ADM1", "geometry"]).rename(columns={'ID': 'Total n°'})

fd_columns = [col for col in stats1.columns if col.startswith("1in")]

for col in fd_columns:

stats1[col] = (stats1[col]/stats1["Total n°"])*100

stats1[fd_columns] = stats1[fd_columns].round(2)

display(stats1)

| Total n° | 1in5 | 1in10 | 1in20 | 1in50 | 1in75 | 1in100 | 1in200 | 1in250 | 1in500 | 1in1000 | |

|---|---|---|---|---|---|---|---|---|---|---|---|

| Facility Type | |||||||||||

| clinic | 596 | 2.68 | 4.70 | 7.55 | 14.09 | 19.13 | 21.31 | 26.68 | 27.68 | 34.56 | 43.12 |

| dentist | 39 | 7.69 | 7.69 | 15.38 | 17.95 | 20.51 | 23.08 | 25.64 | 25.64 | 33.33 | 38.46 |

| doctors | 123 | 9.76 | 13.01 | 21.14 | 37.40 | 41.46 | 42.28 | 47.97 | 50.41 | 53.66 | 59.35 |

| hospital | 1352 | 3.25 | 5.18 | 8.88 | 16.27 | 20.04 | 23.74 | 32.17 | 33.80 | 43.42 | 49.63 |

| pharmacy | 506 | 2.57 | 4.15 | 6.32 | 13.83 | 18.97 | 23.52 | 29.25 | 30.04 | 39.13 | 44.07 |

stats1_long = pd.melt(stats1.reset_index().drop(columns = "Total n°"), id_vars="Facility Type", var_name="scen")

scen = stats1_long.scen.unique()

# % of Facilities disrupted, by ADM1 (Districts)

stats2 = geodf_hf[geodf_hf != 0].groupby("ADM1").count().drop(columns = ["Facility Type", "geometry"]).rename(columns={'ID': 'Total n°'})

fd_columns = [col for col in stats2.columns if col.startswith("1in")]

for col in fd_columns:

stats2[col] = (stats2[col]/stats2["Total n°"])*100

stats2[fd_columns] = stats2[fd_columns].round(2)

# Merge with ADM1 geometries

stats2 = stats2.merge(adm1[["ADM1", "geometry"]], on='ADM1', how='left').set_index("ADM1")

stats2 =gpd.GeoDataFrame(stats2, geometry=stats2["geometry"], crs="EPSG:4326")

display(stats2)

| Total n° | 1in5 | 1in10 | 1in20 | 1in50 | 1in75 | 1in100 | 1in200 | 1in250 | 1in500 | 1in1000 | geometry | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| ADM1 | ||||||||||||

| Azad Kashmir | 12 | 8.33 | 8.33 | 25.00 | 25.00 | 25.00 | 25.00 | 25.00 | 25.00 | 25.00 | 25.00 | MULTIPOLYGON (((73.82016 33.01731, 73.78817 33... |

| Balochistan | 38 | 0.00 | 0.00 | 2.63 | 7.89 | 15.79 | 18.42 | 26.32 | 31.58 | 42.11 | 44.74 | MULTIPOLYGON (((63.85792 25.12653, 63.85792 25... |

| Federally Administered Tribal Areas | 7 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 14.29 | 14.29 | 14.29 | 14.29 | 28.57 | MULTIPOLYGON (((70.19426 31.09286, 70.18851 31... |

| Gilgit-Baltistan | 71 | 7.04 | 8.45 | 16.90 | 39.44 | 42.25 | 43.66 | 47.89 | 47.89 | 47.89 | 52.11 | MULTIPOLYGON (((75.20869 34.85907, 75.18998 34... |

| Islamabad | 145 | 1.38 | 1.38 | 2.76 | 6.21 | 6.90 | 6.90 | 10.34 | 10.34 | 13.10 | 15.17 | MULTIPOLYGON (((72.88009 33.75444, 72.90147 33... |

| Khyber-Pakhtunkhwa | 164 | 10.37 | 14.63 | 20.12 | 29.27 | 34.76 | 40.24 | 43.90 | 48.17 | 56.71 | 60.98 | MULTIPOLYGON (((70.33557 31.35058, 70.32341 31... |

| Punjab | 934 | 3.64 | 6.21 | 11.46 | 22.06 | 26.77 | 31.05 | 41.97 | 43.79 | 56.64 | 65.42 | MULTIPOLYGON (((70.96970 27.72655, 70.96156 27... |

| Sindh | 1267 | 2.29 | 3.71 | 5.45 | 10.34 | 14.68 | 17.52 | 22.57 | 23.28 | 29.83 | 35.52 | MULTIPOLYGON (((67.40986 24.00458, 67.40986 24... |

Map HF Impact Results#

fig = plt.figure(figsize=(12, 6))

ax0 = fig.add_subplot(111)

title = "Floods impact by Facility Type"

ax0.set_title(title)

ax0.set_xticklabels(scen)

ax0.set_xlabel("Flood Scenarios (Return Period)")

ax0.set_ylabel("Health Facilities impacted (%)")

ax0.set_ylim(0,62)

ax0.legend(loc='upper left', fontsize = 10, title = "Facility type")

ax0 = sns.barplot(

data=stats1_long, hue="Facility Type",# errorbar=("pi", 50),

x="scen", y="value",

palette='colorblind', alpha=.6,

)

plt.savefig(join(out_path, iso, title + ".png"), dpi=300, bbox_inches='tight', facecolor='white')

No artists with labels found to put in legend. Note that artists whose label start with an underscore are ignored when legend() is called with no argument.

figsize = (16, 12)

fig = plt.figure(figsize=figsize)

projection = ccrs.PlateCarree()

gs = gridspec.GridSpec(3, 4)

title = "Flood impact by Districts (ADM1)"

fig.suptitle(title, size=16, y=0.95)

# Define the colormap

cmap = plt.get_cmap('YlOrRd')

# Define the normalization from 0 to 45

norm = colors.Normalize(vmin=0, vmax=60)

for i, flood in enumerate(scen):

if i < 4:

ax = fig.add_subplot(gs[0, i], projection=projection)

if ((i > 3) and (i < 8)):

ax = fig.add_subplot(gs[1, i-4], projection=projection)

if i > 7:

ax = fig.add_subplot(gs[2, i-7], projection=projection)

ax.set_title(flood)

ax.get_xaxis().set_visible(True)

ax.get_yaxis().set_visible(True)

# Plot the data

stats2.plot(

ax=ax, column=flood, cmap=cmap, legend=False,

alpha=1, linewidth=0.2, edgecolor='black',

norm=norm

)

ax.background_img(name='NaturalEarthRelief', resolution='high', extent = [adm0.bounds.minx[0], adm0.bounds.maxx[0], adm0.bounds.miny[0], adm0.bounds.maxy[0]])

# Add a colorbar to the figure, adjusting its position via `fig.add_axes`

cax = fig.add_axes([0.92, 0.25, 0.015, 0.5]) # Adjust the position and size of the colorbar here

sm = plt.cm.ScalarMappable(cmap=cmap, norm=norm)

sm.set_array([])

fig.colorbar(sm, cax=cax, label="Facilities impacted (%)")

plt.show()

plt.savefig(join(out_path, iso, title + ".png"), dpi=300, bbox_inches='tight', facecolor='white')

<Figure size 640x480 with 0 Axes>

Flood impact on Friction Surface#

Consider the Standardized Fmax flood rasters, thus exploiting the original FATHOM dataset resolution

We consider different degrees of accessibility/mobility disruption according to different flood depth (FD) levels

If the FD is less than 0.3 meters, than accessibility is preserved but signifcantly impacted (slowered)

If the FD is higher than 0.3 meters, than mobility is interrupted on that specific pathway

# Import multiple rasterio .tif file as a dictionary

# Keys are return periods

# Values are rasterio arrays

flood_path = join(out_path, iso, 'flood')

files=os.listdir(flood_path)

flood_dict_std = {}

for file in files:

if file.startswith('STD') == True:

key = file.split('_')[2].split('.')[0]

value = rio.open(join(flood_path,file)) #.read(1)

flood_dict_std[key] = value

display(flood_dict_std["1in10"].read_crs())

CRS.from_epsg(4326)

# Create weights for the Friction Surface

# If water level is less than 30 cm, accessibility is affected (from pregnolato et al., (2017))

# If water level is more than 30 cm, accessibility is disrupted (travel time *50)

def friction_flood_weight(flood_rio):

''' Generate flood derived weights for the friction surface

INPUTS

flood_rio [rasterio] - raster with flood depths from which to generate weights

RETURNS

flood_rio [rasterio] - raster with weights

'''

from scipy.interpolate import interp1d

# Function for scale Flood Depth array between 1 (min) and 0 (max)

def scale_array(arr):

min_val = np.min(arr)

max_val = np.max(arr)

scaled_arr = (arr - min_val) / (max_val - min_val)

scaled_arr = 1 - scaled_arr # Invert the scaled values

return scaled_arr

flood_rio = flood_rio.read(1)

flood_rio_orig = flood_rio.copy()

# Apply the quadratic (parabolic) relationship from Pregnolato et al., (2017)

# Describes the vehicles max speed according to Flood Depth values (in mm)

flood_arr = flood_rio[(flood_rio_orig > 0) & (flood_rio_orig < 0.3)]*1000 # flood depth from m to mm

depth_val = np.arange(1,300,0.1) # Define discrete Flood Depth values between 1 mm and 300 mm

func = scale_array(9*10**(-4)*(depth_val**2) - 0.5529*depth_val + 86.9448) # eq.4 from Pregnolato et al., (2017)

# Scale the function with the maximum vehicles speed

func = func*func.max()**-1

interp_func = interp1d(depth_val, func, kind='linear', fill_value='extrapolate')

flood_arr_weight = interp_func(flood_arr)

# Flood Depth < 0 or 999 (open waters) -> 0

# Flood Depth > 30 cm -> 50

# Flood Depth > 0 & < 30 cm -> inverse of quadratic function (between 1 and 20 circa)

flood_rio[(flood_rio_orig > 0) & (flood_rio_orig < 0.3)] = 1/flood_arr_weight

flood_rio[(flood_rio_orig <= 0) | (flood_rio_orig == 999)] = 1

flood_rio[(flood_rio_orig >= 0.3) & (flood_rio_orig < 999)] = 50

# To ensure that weights are always >1 (avoid decresing travel time)

flood_rio[(flood_rio <1)] = 1

del flood_rio_orig

return flood_rio

flood_dict_fd = flood_dict_std.copy()

for key in flood_dict_fd.keys():

flood_dict_fd[key] = friction_flood_weight(flood_dict_fd[key])

Create new Friction Surface that accounts for Floods disruption

# Weight the Friction Surface with the discrete flood depth array

travel_surf_flood = dict()

for key in flood_dict_fd.keys():

travel_surf_flood[key] = travel_surf.read(1) * flood_dict_fd[key]

Create an MCP graph object from the flooded friction surfaces.

# convert friction surface to traversal time (lazily). Original data are minutes to travel 1 m:

# We convert it to minutes to cross the cell (1000m). This could be revised

inG_data = dict()

inG_data["baseline"] = travel_surf.read(1) * 1000

for key in travel_surf_flood.keys():

inG_data[key] = travel_surf_flood[key] * 1000

# Correct no data values. Not needed but good to check

# inG_data[inG_data < 0] = 99999999

# inG_data[inG_data < 0] = np.nan

mcp = dict()

mcp["baseline"] = graph.MCP_Geometric(inG_data["baseline"])

for key in inG_data.keys():

mcp[key] = graph.MCP_Geometric(inG_data[key])

# Check descriptive statistics

print(f"Mean: {np.mean(inG_data['1in5'])}")

print(f"Max: {np.max(inG_data['1in5'])}")

print(f"Min: {np.min(inG_data['1in5'])}")

print(f"Std: {np.std(inG_data['1in5'])}")

Mean: 41.748477935791016

Max: 22088.69921875

Min: 0.5

Std: 201.65882873535156

Data analysis#

Indicators of interest

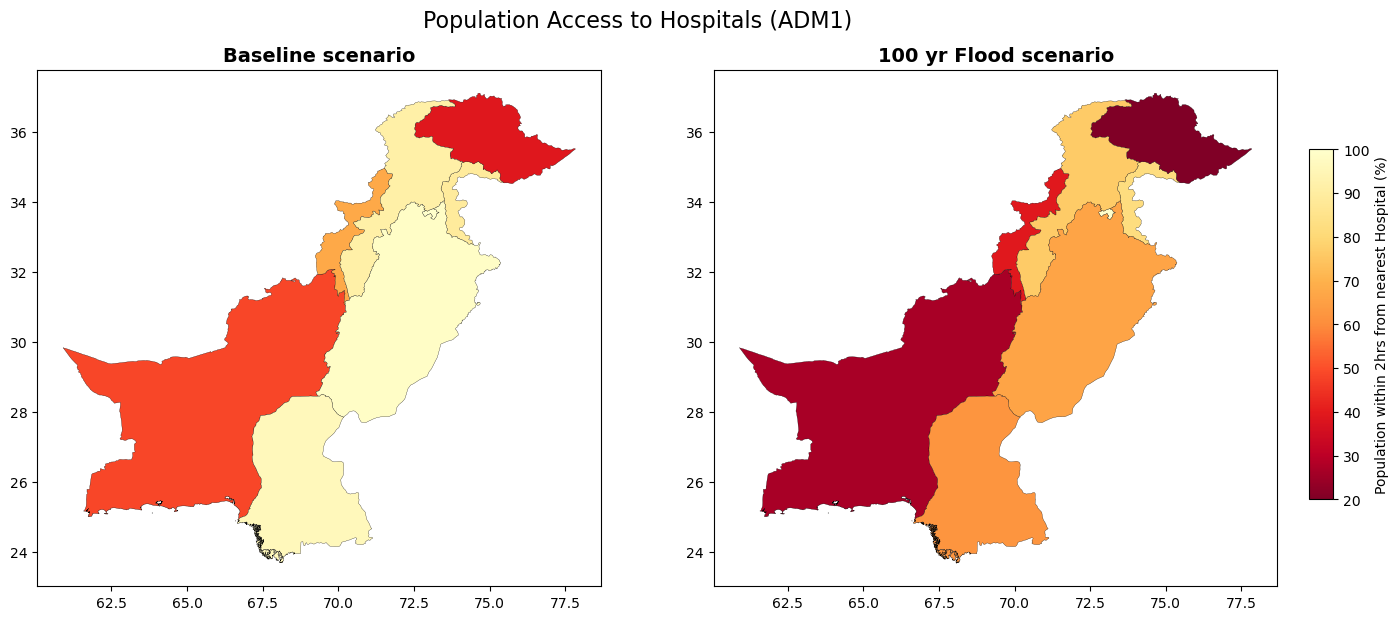

Percentage of population within 2h of driving to the nearest primary care facility (population level, and by SES quintile).

Percentage of population within 2h of driving to the nearest district hospital (population, and by SES quintile).

Percentage of health facilities with direct access to an all season road.

Percentage of health facilities within 2km of an all season road.

# Calculate the travel time from each grid-cell to the nearest not-flooded destination

# According to the Flood scenario, we'll a different number of flooded facilities

res_hf = dict()

res_hf_hosp = dict()

res_hf["baseline"] = ma.calculate_travel_time(travel_surf, mcp["baseline"], geodf_hf)[0]

res_hf_hosp["baseline"] = ma.calculate_travel_time(travel_surf, mcp["baseline"], geodf_hf_hosp)[0]

for key in travel_surf_flood.keys():

res_hf[key] = ma.calculate_travel_time(travel_surf, mcp[key], dry_geodf_hf[key])[0]

res_hf_hosp[key] = ma.calculate_travel_time(travel_surf, mcp[key], dry_geodf_hf_hosp[key])[0]

# Check dimension

display(len(res_hf[key].flatten()), len(dry_geodf_hf_hosp[key]))

# Consider all facilities and Hospitals

res_df.loc[:, 'tt_hf_' + "baseline"] = res_hf["baseline"].flatten()

res_df.loc[:, 'tt_hosp_' + "baseline"] = res_hf_hosp["baseline"].flatten()

for key in travel_surf_flood.keys():

res_df.loc[:, 'tt_hf_' + key] = res_hf[key].flatten()

res_df.loc[:, 'tt_hosp_' + key] = res_hf_hosp[key].flatten()

# remove values where pop is 0 or nan

res_df = res_df.loc[res_df['pop']!=0].copy()

res_df = res_df.loc[~(res_df['pop'].isna())].copy()

res_df.loc[:,'xy'] = res_df.loc[:,'xy'].apply(lambda x: Point(x))

res_df.head(2)

3272280

917

| spatial_index | xy | pop | pointid | tt_hf_baseline | tt_hosp_baseline | tt_hf_1in100 | tt_hosp_1in100 | tt_hf_1in1000 | tt_hosp_1in1000 | ... | tt_hf_1in250 | tt_hosp_1in250 | tt_hf_1in20 | tt_hosp_1in20 | tt_hf_1in5 | tt_hosp_1in5 | tt_hf_1in50 | tt_hosp_1in50 | tt_hf_1in200 | tt_hosp_1in200 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | (0, 0) | POINT (60.89583333333332 37.09583333333333) | 8.048295 | 0 | 1342.468337 | 1342.468337 | 1450.565676 | 1450.565676 | 1631.173022 | 1631.173022 | ... | 1478.206322 | 1478.206322 | 1361.230591 | 1361.230591 | 1348.986713 | 1348.986713 | 1370.691651 | 1370.691651 | 1471.091321 | 1471.091321 |

| 1 | (0, 1) | POINT (60.904166666666654 37.09583333333333) | 7.362457 | 1 | 1347.846581 | 1347.846581 | 1455.943920 | 1455.943920 | 1636.551267 | 1636.551267 | ... | 1483.584567 | 1483.584567 | 1366.608835 | 1366.608835 | 1354.364958 | 1354.364958 | 1376.069895 | 1376.069895 | 1476.469565 | 1476.469565 |

2 rows × 26 columns

Create Geodataframe for the population grid

res_gdf = gpd.GeoDataFrame(res_df, geometry='xy', crs=epsg)

res_gdf.rename(columns={'xy':'geometry'}, inplace=True)

res_gdf.set_geometry('geometry', inplace=True)

# convert travel time from minutes to hours

for col in res_gdf.columns:

if 'tt_' in col:

res_gdf.loc[:, col] = res_gdf.loc[:, col] / 60

res_gdf.head(2)

| spatial_index | geometry | pop | pointid | tt_hf_baseline | tt_hosp_baseline | tt_hf_1in100 | tt_hosp_1in100 | tt_hf_1in1000 | tt_hosp_1in1000 | ... | tt_hf_1in250 | tt_hosp_1in250 | tt_hf_1in20 | tt_hosp_1in20 | tt_hf_1in5 | tt_hosp_1in5 | tt_hf_1in50 | tt_hosp_1in50 | tt_hf_1in200 | tt_hosp_1in200 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | (0, 0) | POINT (60.89583 37.09583) | 8.048295 | 0 | 22.374472 | 22.374472 | 24.176095 | 24.176095 | 27.186217 | 27.186217 | ... | 24.636772 | 24.636772 | 22.687177 | 22.687177 | 22.483112 | 22.483112 | 22.844861 | 22.844861 | 24.518189 | 24.518189 |

| 1 | (0, 1) | POINT (60.90417 37.09583) | 7.362457 | 1 | 22.464110 | 22.464110 | 24.265732 | 24.265732 | 27.275854 | 27.275854 | ... | 24.726409 | 24.726409 | 22.776814 | 22.776814 | 22.572749 | 22.572749 | 22.934498 | 22.934498 | 24.607826 | 24.607826 |

2 rows × 26 columns

Save results as raster

# Checking if files are already saved in the folder

for col in res_gdf.columns:

# Health facilities Travel Time surfaces

if "tt_hf_baseline" in col:

file = join(out_path, iso, "tt_hf_min_motorized_friction_" + col.split('_')[2] + ".tif")

if not os.path.isfile(file):

print("Saving " + file)

rMisc.rasterizeDataFrame(

inD = res_gdf,

outFile = file,

idField = col,

templateRaster = out_travel_surface

)

if 'tt_hf_1in' in col:

file = join(out_path, iso, "tt_hf_min_motorized_friction_flood_" + col.split('_')[2] + ".tif")

if not os.path.isfile(file):

print("Saving " + file)

rMisc.rasterizeDataFrame(

inD = res_gdf,

outFile = file,

idField = col,

templateRaster = out_travel_surface

)

else:

print(file.split('/')[6] + " already present")

# Hospitals Travel Time surfaces

if "tt_hosp_baseline" in col:

file = join(out_path, iso, "tt_hospitals_min_motorized_friction_" + col.split('_')[2] + ".tif")

if not os.path.isfile(file):

print("Saving " + file)

rMisc.rasterizeDataFrame(

inD = res_gdf,

outFile = file,

idField = col,

templateRaster = out_travel_surface

)

if 'tt_hosp_1in' in col:

file = join(out_path, iso, "tt_hospitals_min_motorized_friction_flood_" + col.split('_')[2] + ".tif")

if not os.path.isfile(file):

print("Saving " + file)

rMisc.rasterizeDataFrame(

inD = res_gdf,

outFile = file,

idField = col,

templateRaster = out_travel_surface

)

else:

print(file.split('/')[6] + " already present")

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_baseline.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_baseline.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_flood_1in100.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_flood_1in100.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_flood_1in1000.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_flood_1in1000.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_flood_1in75.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_flood_1in75.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_flood_1in500.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_flood_1in500.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_flood_1in10.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_flood_1in10.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_flood_1in250.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_flood_1in250.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_flood_1in20.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_flood_1in20.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_flood_1in5.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_flood_1in5.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_flood_1in50.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_flood_1in50.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hf_min_motorized_friction_flood_1in200.tif

Saving /home/jupyter-wb618081/Health-Access-Metrics/Output/PAK/tt_hospitals_min_motorized_friction_flood_1in200.tif

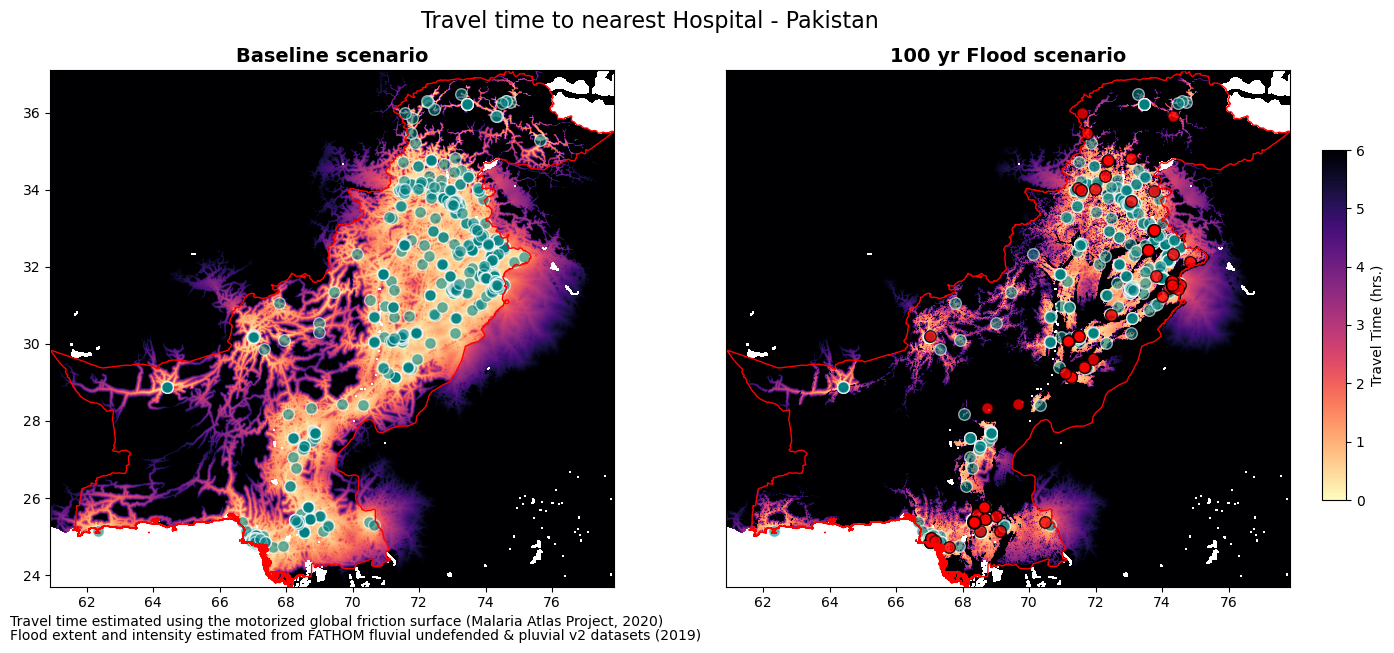

Map Travel Time Results#

# Baseline and Flood scenarios

flood_path = join(out_path, iso, "raster")

files=os.listdir(flood_path)

tt_rio_hf = {}

tt_rio_hosp = {}

for file in files:

if 'tt_hf' in file and 'baseline' in file:

key = file.split('_')[5].split('.')[0]

value = rio.open(join(flood_path,file)) #.read(1)

tt_rio_hf[key] = value

if 'tt_hf' in file and 'flood' in file:

key = file.split('_')[6].split('.')[0]

value = rio.open(join(flood_path,file)) #.read(1)

tt_rio_hf[key] = value

if 'tt_hospitals' in file and 'baseline' in file:

key = file.split('_')[5].split('.')[0]

value = rio.open(join(flood_path,file)) #.read(1)

tt_rio_hosp[key] = value

if 'tt_hospitals' in file and 'flood' in file:

key = file.split('_')[6].split('.')[0]

value = rio.open(join(flood_path,file)) #.read(1)

tt_rio_hosp[key] = value

# Multiple Scenario plot

figsize = (16,7)

fig, ax = plt.subplots(1, 2, figsize = figsize)

fonttitle = {'weight':'bold','size':14}

fig.suptitle("Travel time to nearest Hospital - Pakistan", size = 16, y = 0.95)

ax[0].set_title("Baseline scenario", fontdict=fonttitle)

ax[0].get_xaxis().set_visible(True) # plt.axis('off')

ax[0].get_yaxis().set_visible(True)

ax[1].set_title("100 yr Flood scenario", fontdict=fonttitle)

ax[1].get_xaxis().set_visible(True) # plt.axis('off')

ax[1].get_yaxis().set_visible(False)

ext = plotting_extent(tt_rio_hosp['baseline'])

im = ax[0].imshow(tt_rio_hosp['baseline'].read(1), vmin=0, vmax=6, cmap='magma_r', extent=ext)

geodf_hf_hosp.plot(ax=ax[0], facecolor='teal', edgecolor='white', markersize=70, alpha=0.6)

adm0.plot(ax=ax[0], facecolor="none", edgecolor='red')

FD = "1in100"

ext = plotting_extent(tt_rio_hosp[FD])

im1 = ax[1].imshow(tt_rio_hosp[FD].read(1), vmin=0, vmax=6, cmap='magma_r', extent=ext)

dry_geodf_hf_hosp[FD].plot(ax=ax[1], facecolor='teal', edgecolor='white', markersize=70, alpha=0.6)

flood_geodf_hf_hosp[FD].plot(ax=ax[1], facecolor='red', edgecolor='black', markersize=70, alpha=0.8)

adm0.plot(ax=ax[1], facecolor="none", edgecolor='red')

# divider = make_axes_locatable(ax[1])

# cax = divider.append_axes('bottom', size="5%", pad=0.4) # Adjust pad value for centering

# fig.subplots_adjust(bottom=0.1, top=1, left=0.1, right=0.8, wspace=0.2, hspace=0.25)

# cax = fig.add_axes([.25, 0.15, 0.4, 0.03]) # Left, Bottom, Width, Height

# cb = fig.colorbar(im1, cax=cax, orientation='horizontal')

# cb.set_label("Travel Time (hrs.)")

cax = fig.add_axes([0.92, 0.25, 0.015, 0.5]) # Adjust the position and size of the colorbar here

fig.colorbar(im1, cax=cax, label="Travel Time (hrs.)")

# ctx.add_basemap(ax, source=ctx.providers.CartoDB.Positron, crs='EPSG:4326', zorder=-1)

txt = "Travel time estimated using the motorized global friction surface (Malaria Atlas Project, 2020)"

txt2 = "Flood extent and intensity estimated from FATHOM fluvial undefended & pluvial v2 datasets (2019)"

plt.figtext(0.1, 0.07, txt, wrap=True, horizontalalignment='left', fontsize=10)

plt.figtext(0.1, 0.05, txt2, wrap=True, horizontalalignment='left', fontsize=10)

plt.savefig(join(out_path, iso, "Travel time to nearest Hospital (1in100) - travel_surf.png"), dpi=300, bbox_inches='tight', facecolor='white')

Summarize population within 2 hours from Health Facilities#

pop = pop_surf.read(1, masked=True)

df_pop = pd.DataFrame(zonal_stats(adm1, pop.filled(), affine=pop_surf.transform, stats='sum', nodata=pop_surf.nodata)).rename(columns={'sum':'pop'})

df_pop_hosp = df_pop.copy()

pop_120_hospital = dict()

df_pop_120_hospital = dict()

for key in tt_rio_hosp.keys():

pop_120_hospital[key] = pop*(tt_rio_hosp[key].read(1)<=2)

df_pop_120_hospital[key] = pd.DataFrame(zonal_stats(adm1, pop_120_hospital[key].filled(), affine=pop_surf.transform, stats='sum', nodata=pop_surf.nodata)).rename(columns={'sum':'pop_120_hosp_'+key})

df_pop_hosp = df_pop_hosp.join(df_pop_120_hospital[key])

df_pop_hosp.loc[:, "hosp_pct_"+key] = (df_pop_hosp.loc[:, "pop_120_hosp_"+key]/df_pop_hosp.loc[:, "pop"])*100

res = adm1.join(df_pop_hosp)

res.head(2)

| ID_0 | COUNTRY | ID_1 | ADM1 | VARNAME_1 | NL_NAME_1 | TYPE_1 | ENGTYPE_1 | CC_1 | HASC_1 | ... | pop_120_hosp_1in20 | hosp_pct_1in20 | pop_120_hosp_1in250 | hosp_pct_1in250 | pop_120_hosp_1in10 | hosp_pct_1in10 | pop_120_hosp_1in200 | hosp_pct_1in200 | pop_120_hosp_1in500 | hosp_pct_1in500 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | PAK | Pakistan | PAK.1_1 | Azad Kashmir | Kashmir | Centrally Administered Area | Centrally Administered Area | PK.JK | ... | 3329775.25 | 84.124949 | 3217804.250 | 81.296063 | 3360736.00 | 84.907155 | 3223334.75 | 81.435788 | 3186742.25 | 80.511298 | ||

| 1 | PAK | Pakistan | PAK.2_1 | Balochistan | Baluchistan|BéloutchistanBeluchistan|Baluchistão | Province | Province | PK.BA | ... | 3036557.75 | 36.645357 | 1896461.625 | 22.886610 | 3401321.75 | 41.047350 | 1921018.00 | 23.182958 | 1732674.75 | 20.910020 |

2 rows × 35 columns

print(f"Summary of % of pop. within 2 hr. of hospital \n {res.hosp_pct_baseline.describe()}")

print(f"Summary of % of pop. within 2 hr. of hospital given 100yr RP floods \n {res.hosp_pct_1in100.describe()}")

Summary of % of pop. within 2 hr. of hospital

count 8.000000

mean 78.728311

std 23.994723

min 38.943175

25% 62.757630

50% 90.125179

75% 96.645953

max 100.000000

Name: hosp_pct_baseline, dtype: float64

Summary of % of pop. within 2 hr. of hospital given 100yr RP floods

count 8.000000

mean 58.546627

std 28.984213

min 15.703214

25% 36.026756

50% 64.095637

75% 77.879992

max 99.954868

Name: hosp_pct_1in100, dtype: float64

Access to health disaggregated by wealth quintile#

Categorize population grid by wealth quintiles, and then summarize the population with access to health (within 2 hours of health facility or hospital)

Hospitals

# Open Relative Wealth index and Population from Facebook

df_fb_rwi = pd.read_csv(os.path.join(data_dir, iso, 'meta', f'{iso.lower()}_relative_wealth_index.csv'))

geodf_fb = [Point(xy) for xy in zip(df_fb_rwi.longitude, df_fb_rwi.latitude)]

geodf_fb = gpd.GeoDataFrame(df_fb_rwi, crs=epsg, geometry=geodf_fb)

geodf_fb = gpd.sjoin(geodf_fb, adm1[['ADM1','geometry']])

df_fb_pop = pd.read_csv(os.path.join(data_dir, iso, 'meta', f'{iso.lower()}_general_2020.csv'))

df_fb_pop = df_fb_pop.rename(columns={f'{iso.lower()}_general_2020': 'pop_2020'})

df_fb_flood_hosp = dict()

for key in tt_rio_hosp.keys():

# Zonal mean of RWI in tt_rio_hosp cells

df_fb_flood_hosp[key] = pd.DataFrame(zonal_stats(geodf_fb, tt_rio_hosp[key].read(1, masked = True).filled(), affine=tt_rio_hosp[key].transform, stats='mean', nodata=tt_rio_hosp[key].nodata)).rename(columns={'mean':'tt_hospital_'+key})

geodf_fb = geodf_fb.join(df_fb_flood_hosp[key])

# Discretize in quantiles

geodf_fb.loc[:, "rwi_cut_"+key] = pd.qcut(geodf_fb['rwi'], [0, .2, .4, .6, .8, 1.], labels=['lowest', 'second-lowest', 'middle', 'second-highest', 'highest'])

# Merge RWI and population by matching the grid (quadkey)

df_fb_pop['quadkey'+key] = df_fb_pop.apply(lambda x: str(quadkey.from_geo((x['latitude'], x['longitude']), 14)), axis=1)

geodf_fb['quadkey'+key] = geodf_fb.apply(lambda x: str(quadkey.from_geo((x['latitude'], x['longitude']), 14)), axis=1)

bing_tile_z14_pop = df_fb_pop.groupby('quadkey'+key, as_index=False)['pop_2020'].sum()

rwi = geodf_fb.merge(bing_tile_z14_pop[['quadkey'+key, 'pop_2020']], on='quadkey'+key, how='inner')

res_rwi_adm0 = pd.DataFrame()

res_rwi_adm1 = pd.DataFrame()

for key in tt_rio_hosp.keys():

# Define boolean proximity within 2hrs from hospital

rwi.loc[:,"tt_hospital_bool_" + key] = rwi['tt_hospital_'+key]<=2

# Aggregate at country level (ADM0)

pop_adm0 = rwi[['rwi_cut_'+key, 'pop_2020']].groupby(['rwi_cut_'+key]).sum()

hosp_adm0 = rwi.loc[rwi["tt_hospital_bool_" + key]==True, ['rwi_cut_'+key, 'pop_2020']].groupby(['rwi_cut_'+key]).sum().rename(columns={'pop_2020':'pop_120_hospital_'+key})

rwi_adm0 = pop_adm0.join(hosp_adm0)

res_rwi_adm0.loc[:, "hospital_pct_"+key] = rwi_adm0['pop_120_hospital_'+key]/rwi_adm0['pop_2020']

# Aggregate at region level (ADM1)

pop_adm1 = rwi[['ADM1','rwi_cut_'+key, 'pop_2020']].groupby(['ADM1','rwi_cut_'+key]).sum()

hosp_adm1 = rwi.loc[rwi["tt_hospital_bool_" + key]==True, ['ADM1','rwi_cut_'+key, 'pop_2020']].groupby(['ADM1','rwi_cut_'+key]).sum().rename(columns={'pop_2020':'pop_120_hospital_'+key})

rwi_adm1 = pop_adm1.join(hosp_adm1)

res_rwi_adm1.loc[:, "hospital_pct_"+key] = rwi_adm1['pop_120_hospital_'+key]/rwi_adm1['pop_2020']

res_rwi_adm0.reset_index(inplace = True)

res_rwi_adm0 = res_rwi_adm0.rename(columns={'rwi_cut_1in5':'quantiles'})

res_rwi_adm1.reset_index(inplace = True)

res_rwi_adm1 = res_rwi_adm1.rename(columns={'rwi_cut_1in5':'quantiles'})

def sort_flood_col(gdf, str_start, str_sep):

# Define str_start and str_sep as the strings that identify the start of column names and the separator with the numerical value

fd_columns = [col for col in gdf.columns if str_start in col]

non_fd_columns = [col for col in gdf.columns if not str_start in col]

fd_num = [(int(col.split(str_sep)[1]), col) for col in fd_columns]

fd_col_sort = [col for _, col in sorted(fd_num)]

new_column_order = non_fd_columns + fd_col_sort

# Reorder the DataFrame columns

gdf = gdf[new_column_order]

return(gdf)

# Melting for seaborn plot

res_rwi_adm0 = sort_flood_col(res_rwi_adm0, "1in", "in")

res_rwi_adm0_long = pd.melt(res_rwi_adm0, id_vars="quantiles", var_name="scen")

res_rwi_adm0_long.value = res_rwi_adm0_long.value*100

scen = res_rwi_adm0_long.scen.unique()

scen = [s.split("_")[2] for s in scen]

res_rwi_adm1 = sort_flood_col(res_rwi_adm1, "1in", "in")

res_rwi_adm1_long = pd.melt(res_rwi_adm1, id_vars=["ADM1","quantiles"], var_name="scen")

res_rwi_adm1_long.value = res_rwi_adm1_long.value*100

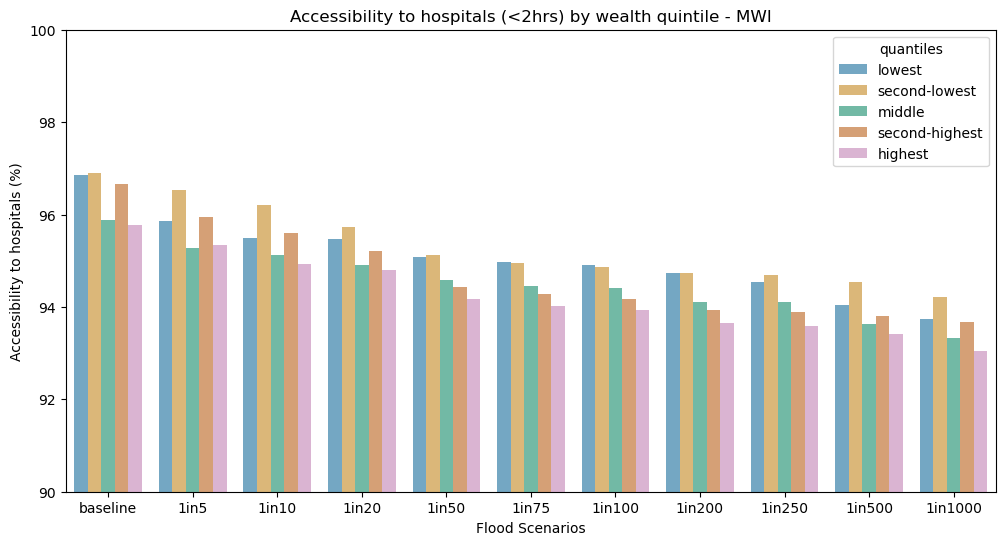

fig = plt.figure(figsize=(12, 6))

ax0 = fig.add_subplot(111)

ax0.set_title("Accessibility to hospitals (<2hrs) by wealth quintile - " + iso)

ax0.set_xticklabels(scen)

ax0.set_xlabel("Flood Scenarios")

ax0.set_ylabel("Accessibility to hospitals (%)")

ax0.set_ylim(90,100)

ax0.legend(loc='upper left', fontsize = 10, title = "RWI quantiles")

ax0 = sns.barplot(

data=res_rwi_adm0_long, hue="quantiles",# errorbar=("pi", 50),

x="scen", y="value",

palette='colorblind', alpha=.6,

)

No artists with labels found to put in legend. Note that artists whose label start with an underscore are ignored when legend() is called with no argument.

res_rwi_adm1["hospital_pct_impact"] = (res_rwi_adm1["hospital_pct_1in1000"] - res_rwi_adm1["hospital_pct_baseline"])/res_rwi_adm1["hospital_pct_baseline"]

test = res_rwi_adm1.copy()

test = test[["ADM1", "quantiles", "hospital_pct_impact"]]

quant = pd.DataFrame(test["ADM1"].unique())

for q in test.quantiles.unique().to_list():

quant["hospital_pct_impact_"+ q] = test[test["quantiles"] == q]["hospital_pct_impact"].values.round(3)*100

quant = quant.rename(columns = {quant.columns[0]:"ADM1"})

quant = quant.merge(right = adm1[["ADM1",'geometry']], on = "ADM1", how = "left")

quant = gpd.GeoDataFrame(quant, crs=epsg, geometry="geometry")

# quant = quant*100

quant.head(2)

| ADM1 | hospital_pct_impact_lowest | hospital_pct_impact_second-lowest | hospital_pct_impact_middle | hospital_pct_impact_second-highest | hospital_pct_impact_highest | geometry | |

|---|---|---|---|---|---|---|---|

| 0 | Balaka | 0.0 | -0.5 | -2.1 | -2.0 | -1.5 | MULTIPOLYGON (((35.07923 -15.30382, 35.07925 -... |

| 1 | Blantyre | -5.5 | -2.1 | 0.0 | -6.6 | -2.1 | MULTIPOLYGON (((34.94884 -15.98430, 34.94793 -... |

import matplotlib.gridspec as gridspec

import cartopy.feature as cfeature

os.environ['CARTOPY_USER_BACKGROUNDS'] = 'C:/Users/wb618081/OneDrive - WBG/Python/Backgrounds/'

figsize = (10,10)

fig = plt.figure(figsize=figsize) #, constrained_layout=True)

projection = ccrs.PlateCarree()

gs = gridspec.GridSpec(2, 6)

fig.suptitle("Sensitivity of Hospitals Accessibility (<2hrs) by wealth quintile (ADM1)", size = 16, y = 0.95)

# fonttitle = {'fontname':'Open Sans','weight':'bold','size':14}

for i, q in enumerate(quant.columns[1:6]):

if i < 3:

ax = fig.add_subplot(gs[0, 2 * i : 2 * i + 2], projection=projection)

else:

ax = fig.add_subplot(gs[1, 2 * i - 5 : 2 * i - 3], projection=projection)

ax.set_title(q.split("_")[3])

ax.get_xaxis().set_visible(True) # plt.axis('off')

ax.get_yaxis().set_visible(True)

cmap = "RdYlGn"

if i == 4:

quant.plot(

ax=ax, column=q, cmap=cmap, legend=True,

alpha=1, linewidth=0.2, edgecolor='black',

# scheme = "user defined", classification_kwds = {'bins': [-0.02,-0.04,-0.6,-0.08,-0.10,-0.12]},

legend_kwds = {

'label': "Reduction of within 2km \n Hospitals accessibility (%)",

# "loc": "upper right",

# "bbox_to_anchor": (2.7, 1),

# 'fontsize': 10,

# 'fmt': "{:.0%}",

# 'title_fontsize': 12

}

)

else:

quant.plot(

ax=ax, column=q, cmap=cmap, legend=False,

alpha=1, linewidth=0.2, edgecolor='black',

# scheme = "naturalbreaks", classification_kwds = {'bins': [-0.02,-0.04,-0.6,-0.08,-0.10,-0.12]},

legend_kwds = {

'title': "Reduction of within 2km \n Hospitals accessibility (%)",

"loc": "upper right",

"bbox_to_anchor": (2.7, 1),

'fontsize': 10,

'fmt': "{:.0%}",

'title_fontsize': 12

}

)

# ax.background_img(name='NaturalEarthRelief', resolution='high', extent = [32.5, 36, -18, -9])

# plt.savefig(os.path.join(scratch_dir, "Health_Access.png"), dpi=150, bbox_inches='tight', facecolor='white')

fig = plt.figure(figsize=(12, 6))

ax0 = fig.add_subplot(111)

ax0.set_title("Accessibility to hospitals (<2hrs) by wealth quintile")

ax0.set_xticklabels(scen)

ax0.set_xlabel("Flood Scenarios")

ax0.set_ylabel("Accessibility to hospitals (%)")

ax0.set_ylim(90,100)

ax0.legend(loc='upper left', fontsize = 10, title = "RWI quantiles")

ax0 = sns.barplot(

data=res_rwi_adm1_long, hue="quantiles",# errorbar=("pi", 50),

x="scen", y="value",

palette='colorblind', alpha=.6,

)